The Intelligence Layer: What happens when companies stop using humans to move information around

I have been experimenting with OpenAI's Codex in a new way lately.

Not just as a coding tool. More like a project brain and operating system for consulting work.

For a project, that means giving it all the available raw materials: meeting notes, research, delivery plans, decision logs, draft documents and deliverable templates, reference assets, client context, and the messy thinking that normally sits across notebooks, folders, inboxes, and people's heads.

Then I started asking it to do the work that sits between the work.

Create a database of events and facts. Find the missing assumption. Reconcile two competing versions of the story. Turn a pile of source material into a decision brief. Remember what we decided last week. Show me where the strategy, delivery plan, and risk picture no longer line up. Build the end deliverables. Surface and escalate key information to the relevant people.

If this sounds a little like OpenClaw (the open-source agentic operating system) for consulting, that is basically what it is.

The interesting part is that it started to behave like a thin intelligence layer around the project. It gave the work a memory. It turned tacit context into something more explicit. It made the project easier to reason over. It helped build deliverables and orchestrate connections across people and systems. This builds on a thread I wrote about in my 2025 productivity hacks and what I am testing for 2026, but the pattern now feels much bigger than personal productivity. The team design implications are also profound.

And that has sent me back to a much bigger question, one I have been discussing with other leaders across Australia.

If AI can gather, refine, analyse, and output information between systems and humans, what does the organisational hierarchy of tomorrow look like? And what does the enterprise operating system of tomorrow look like?

AI is starting to change the economics of that work.

It can ingest context, synthesise competing inputs, draft structured outputs, compare evidence, identify gaps, perform quality assurance and move information between systems and humans faster than the meeting cycle can respond.

And it is now raising questions about how we should design the organisational structures that create winning companies.

TL;DR

- At the heart of every company is data. The uncomfortable bit is that most of the important data has never lived cleanly in a database: it lives in meeting rooms and in people's heads.

- For decades, middle management has been the human knowledge-processing layer of the firm. Hierarchy was the information infrastructure, because there wasn't a better option.

- AI changes the design question. Companies can now wrap their systems of record in an enterprise intelligence layer that captures tacit and explicit data, transforms it, and orchestrates actions between humans, agents, and systems.

- This isn't "AI replaces managers". It's the replacement of the human knowledge-processing work that management has been doing alongside its real job.

- Humans should move upward: understanding, judgement, creativity, taste, ambition, accountability, human connection. Less status-managed. Not managerless.

- The trap is lazy delayering and surveillance dressed up as clarity. The opportunity is organisational clarity and speed we've never been able to design for before.

- The next enterprise operating system is not CRM/ERP plus copilots. It's systems of record wrapped in intelligence, with humans pulled upward into outcomes.

Block's From Hierarchy to Intelligence names the shift well, but I think the deeper point is this: hierarchy has been doing the work of weak organisational memory.

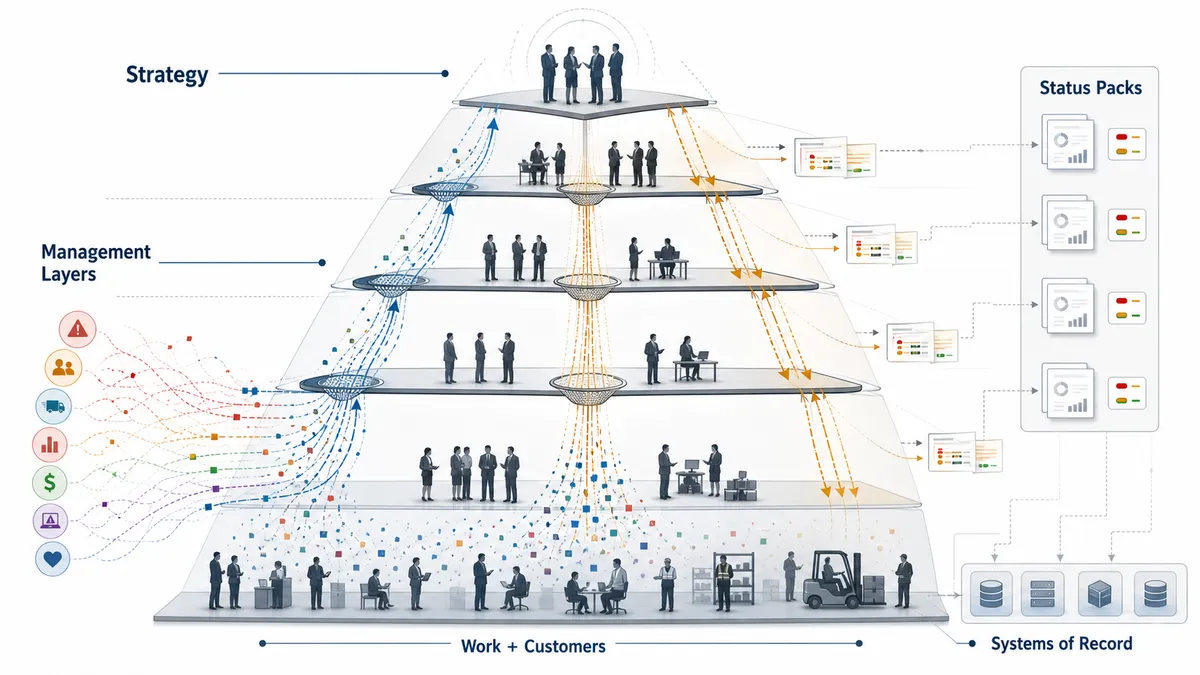

1/ The status pack is a symptom

Let me start with something most enterprise readers will recognise.

A program of work. Four or five streams. Each stream lead assembles a weekly update. Those get aggregated by a PMO into a twenty-slide pack. The pack gets re-coloured twice — once by the program director, once by the sponsor's chief of staff — before it lands in a steerco where six executives read it for the first time in the room.

RAG statuses soften. Decisions get deferred. Risks get rephrased. The deck is polished. And somewhere in the middle of it all, there is a real truth about the program that is known — fully and precisely — by almost no one.

I don’t have a clean benchmark for this, but I’ve seen enough leadership teams to know the pattern. A huge amount of management time is not spent making decisions. It is spent preparing the room so a decision might eventually be made: gathering updates, reconciling versions of the truth, routing context, polishing the pack, and translating reality into something safe enough to present.

So what for enterprise leaders: the status pack is not the problem. The status pack is a symptom. It exists because there is no other reliable way to compress the company's operating state into something a human decision-maker can actually hold in their head. Hierarchy is doing the compression. Sometimes badly, expensively, and politically — but doing it.

The question is what happens when an AI system can consume context across teams — meetings, emails, status reports, system data — and produce a more complete, testable version of the operating truth.

[Figure: Traditional hierarchy as information transfer]

2/ The missing half of company data

At the heart of every company is data. The uncomfortable bit is that most of the important data has never lived cleanly in a database.

There are two kinds of data in every organisation.

The first is formal, structured, system data. CRM records, ERP transactions, HRIS data, finance ledgers, tickets, code repos, customer platforms, risk registers, dashboards, documents, policies, and workflow tools. This is what most "data strategies" are about. It's what vendors sell against. It's what CDOs have traditionally measured.

The second is tacit data. Context. Assumptions. Intent. Politics. Trade-offs. Commitments made on a call. Warnings nobody wrote down. Fears. Relationships. Judgement. Half-formed strategy. The actual reason the Tuesday decision went the way it did. The reason a senior engineer won't touch that service. The reason key customers or internal teams are quietly unhappy.

The meeting room has always been a data store. It has just been a terrible one.

For decades, we've treated only the first kind of data as "data" and relied on humans — mostly managers — to carry the second kind around in their heads and bring it into the right rooms at the right time. That was a reasonable compromise when there was no alternative. It is no longer the only option.

So what for enterprise teams: if your AI strategy only addresses system data, you are automating the less interesting half of the company. The hard part — and the valuable part — is capturing and using the tacit layer without turning the company into a surveillance state. This is where experience, IP, and context live. You could argue this is where a company's real advantage lives: the design taste behind a product or marketing output, the engineering judgement behind a construction decision, the customer-service habits that create a consistently good experience, and the risk instincts that stop a bad call before it becomes visible in a report.

3/ Hierarchy was the old intelligence layer

Every company has an operating system.

Not the one described in the strategy deck. Not the one mapped in the technology architecture. The real one: how decisions are made, how work moves across teams, how lessons are learned, and how reality is translated into action.

That operating system is messy. It is semi-formal, made up of meetings, emails, side conversations, rituals, standard operating procedures, and the handful of processes hardened into systems.

You know this intuitively. When something goes wrong, our systems can usually tell us what happened. We still need people to explain why.

For decades, humans have been the integration layer for that messy middle.

Humans translate decisions, context, requirements, risks, and exceptions between teams and systems. Humans read the signals. Humans summarise the noise. Humans reconcile competing versions of reality. Humans decide what gets escalated, what gets softened, what gets buried, and what gets turned into a twenty-slide pack.

Management organisation theory, from Sloan to Drucker to the post-war consulting playbooks, has largely assumed that knowledge flows through hierarchy. Strategy and intent move down. Signals, problems, and operating truth move up. Middle management is the connective tissue.

Middle management has been the compression algorithm of the firm.

And it has never been neutral. The human layer is shaped by empathy, fear, hope, ambition, loyalty, career risk, fatigue, politics, and the normal mess of being human. Sometimes that makes the organisation more humane. Sometimes it makes the organisation less truthful. Either way, it has never been structured, independent, reliable, or consistently efficacious as an information layer.

This isn't a new observation. Ray Dalio and Bridgewater spent decades trying to codify management, meeting context, and decision-making into something closer to a system — "baseball cards" on people, the Dot Collector, an algorithmic view of who is credible on what. It was clunky, controversial, maybe ahead of its time. But the underlying ambition — turn the meeting room into structured, usable data — predates LLMs by thirty years. Modern AI makes that ambition far more feasible. It does not automatically make it wise.

Block's recent piece, From Hierarchy to Intelligence, reframes how organisations and data should be structured: hierarchy as an information-routing protocol, being replaced by a company world model, a customer world model, reusable capabilities, and an intelligence layer with proper interfaces. It is a bold vision for company design.

It's hard to swallow the Block story whole. The argument is useful, but the context matters: it landed close enough to workforce reductions that it cannot be treated as a neutral operating-model paper. That does not make the idea wrong. It just needs time to play out.

So what for enterprise leaders: when you hear "flatten the org" or "AI-native operating model", ask which problem is actually being solved — information routing, cost, or narrative. They're not the same problem and they don't necessarily have the same answers.

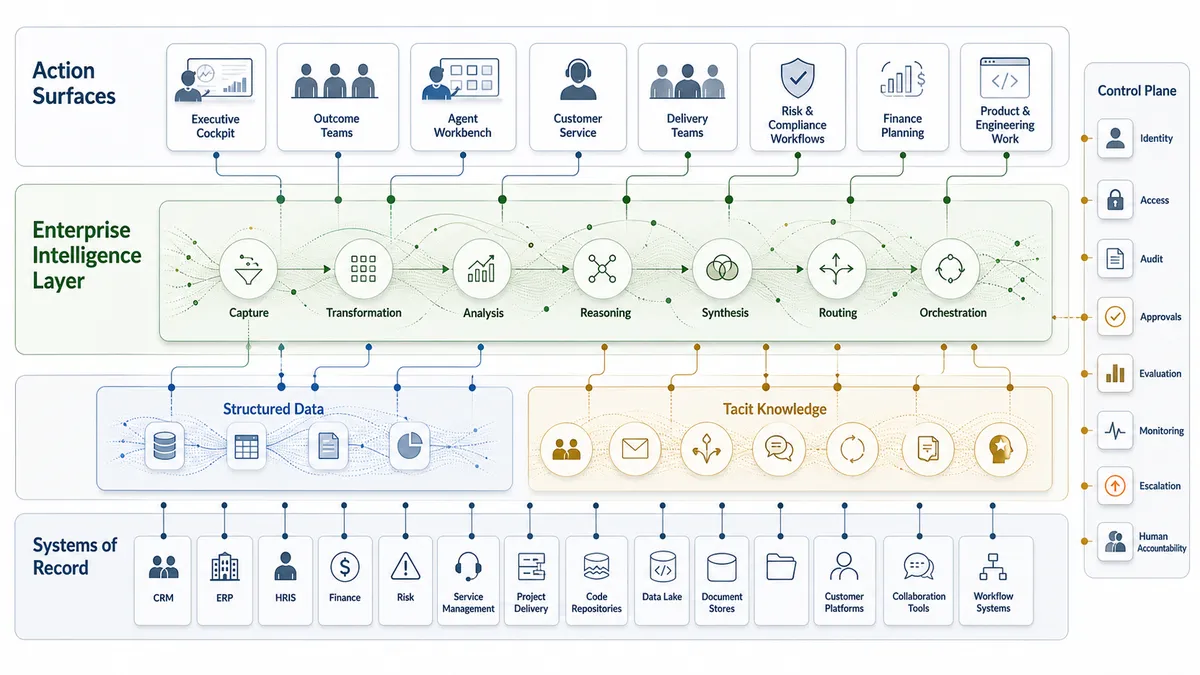

4/ The new intelligence layer

Here's where my thesis lands.

AI can now do something the old stack could not. By AI I mean the current generation of frontier models, combined with agentic harnesses like Claude Code, Codex, and Google Agentspace, protocols like MCP, and enterprise-specific agentic memory, procedures, and controls.

It can ingest meetings, documents, systems, tickets, decisions, risks, commitments, and customer signals. It can transform those into briefs, plans, actions, escalations, and system updates. It can orchestrate work across humans, agents, and systems in near real time. And the direction runs both ways: humans prompt an AI to orchestrate an action, and an AI can trigger a human to do the same.

That is a genuinely different shape. The old enterprise stack had systems of record with applications and management routines layered on top. The new enterprise stack has the potential to wrap those systems in an intelligence layer — one that captures formal and tacit data, refines it, reasons over it, and routes outputs to whoever (or whatever) should act on them next.

In practice, I can already see the shape of the workflows across AI-enabled projects and larger programs. The repo as an operating system: assets/ for raw material, a registry.md or a Postgres database that tracks key data, a continuity.md that carries state across sessions, design docs for epics and definitions of done, and a set of harvested skills that the agent can reuse. The repo is not just code. It's a small, opinionated world model. Claude Code and Codex both work across it because it's legible to both of them, and it's been designed for them as much as for humans.
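To make that layout concrete, here is a minimal sketch of scaffolding it. Only assets/, registry.md, and continuity.md come from my description above; the docs/design and skills folder names, the file contents, and the `scaffold` helper are assumptions for illustration, not a standard.

```python
from pathlib import Path
import tempfile

# Hypothetical layout. Directories map to None, files map to starter content.
LAYOUT = {
    "assets": None,        # raw material: notes, research, templates
    "docs/design": None,   # assumed name: design docs, definitions of done
    "skills": None,        # assumed name: harvested, reusable agent skills
    "registry.md": "# Registry\n\n| id | type | summary | source |\n|---|---|---|---|\n",
    "continuity.md": "# Continuity\n\n## Current state\n\n## Open questions\n\n## Next actions\n",
}


def scaffold(root: Path) -> list[Path]:
    """Create the layout under root and return every path in it."""
    paths = []
    for rel, content in LAYOUT.items():
        path = root / rel
        if content is None:
            path.mkdir(parents=True, exist_ok=True)
        else:
            path.parent.mkdir(parents=True, exist_ok=True)
            if not path.exists():  # never overwrite state from earlier sessions
                path.write_text(content, encoding="utf-8")
        paths.append(path)
    return paths


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        for p in scaffold(Path(tmp) / "project-brain"):
            print(p.relative_to(tmp))
```

The one design choice worth noting is that existing files are left alone: continuity.md only carries state across sessions if nothing resets it.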

Now imagine that shape at enterprise scale. Not a repo — a company world model and a customer world model, as Block puts it, sitting over Salesforce, Workday, ServiceNow, Jira, SAP, your data lake, your Slack, and your meeting transcripts, with an intelligence layer on top doing the knowledge processing and orchestration that middle management has been doing manually.

Microsoft's 2025 Work Trend Index calls the emerging shape "AI-operated, human-led" — human-agent teams, "agent bosses", and real pressure on the traditional org chart. BCG and MIT Sloan's Managing the Machines That Manage Themselves frames agentic AI as a managerial and operating-model shift, not a productivity tool. Their survey data is directional, but the framing is the important bit: this is an operating-model question, not an IT procurement question.

A lot of knowledge work is not going away. It is moving location. It's moving out of the human layer and into the intelligence layer, where it can be done at pace, consistently, and with an audit trail.

So what for enterprise teams: if your AI roadmap is a list of copilots bolted onto existing apps, you're optimising the edges. The centre of gravity is the intelligence layer itself — the thing that reads, reasons, and routes across everything. That is where the next generation of enterprise value will sit, and most organisations don't have an owner for it yet.

5/ Humans move up the stack

If the intelligence layer does the routing, summarising, status-pack assembling, and memory-cache work, what are humans for?

The uncomfortable answer is that much of the work that moves is the work we have mistaken for management: collecting context, translating it, packaging it, and pushing it through the organisation until someone is ready to act.

We do not need humans to be the routers, summarists, status-pack assemblers, and memory caches of the company. We need humans to understand, decide, commit, create, connect, and own the consequences.

That distinction matters. I am not saying companies no longer need humans to think. I am saying a surprising amount of what we have called thinking at work is actually processing: collecting fragments, compressing them, translating them, formatting them, and moving them to the next room.

Understanding is different.

Judgement is different.

Accountability is different.

I saw a great quote today that I think sums up the shift:

There are a few places where the shift will occur across organisations:

Moving into the intelligence layer: the repeatable knowledge-processing work across the enterprise. That means gathering information, reconciling versions of the truth, summarising meetings, drafting briefs, assembling dashboards, coordinating workflows, translating context across teams, triaging escalations, generating code and tests, analysing data, synthesising research, interpreting policy, supporting security and compliance reviews, preparing customer and account briefs, explaining finance variances, summarising HR cases, comparing procurement options, and handling routine operational exceptions.

Moving upward, into humans: strategy and mission alignment, taste and judgement calls, creative direction, coaching and development of people, ambition-setting, difficult conversations, customer relationships, ethical and political calls, accountability for outcomes, and the long-horizon thinking the machine still can't do well.

This is not anti-manager. The point is that hierarchy has been used as information infrastructure because there wasn't a better option. Management — as a craft of coaching, judgement, accountability, and human leadership — is not going anywhere.

The future is not managerless. But it is much less dependent on humans pretending to be middleware.

So what for enterprise teams: the question to ask of every role in the leadership layers is where the person is spending their cognitive energy. If it's mostly processing, the role needs redesign. If it's mostly understanding, creating, deciding, coaching, and owning, the role is doing what it should, and the intelligence layer should be making that person sharper, not redundant.

6/ What the operating model actually looks like

I'm hedging a lot in this section because nobody has fully built this yet at scale. From what I'm seeing across my own work, and in the credible public reporting, the emerging shape has roughly these components.

Old model: systems of record at the bottom, applications on top, and humans in the middle trying to make sense of it all.

New model: systems of record still matter, but the intelligence layer wraps around them — ingesting signals, maintaining context, routing action, and asking humans to approve or decide where judgement matters.

1 – A work graph. A living representation of the actual work of the company — objectives, initiatives, commitments, risks, decisions, dependencies — that both humans and agents can read and update. Think of it as the company's shared memory, not another dashboard.

2 – World models. A company world model (how we operate, what we sell, how we make money, who does what) and a customer world model (who they are, what they need, what we've promised, what we've learned). Block's framing here is useful. These are living artefacts, not wikis.

3 – Reusable capabilities. Things like "draft a board paper", "reconcile two risk registers", "prepare a customer renewal brief", "triage incoming incidents within our risk tolerance". Written once, governed once, used everywhere. I think of these as enterprise-grade versions of the harvested skills we keep in our AI workflows today.

4 – Agent supervisors and player-coaches. Humans whose role is to supervise agent work, set direction, catch failure modes, and coach both the agents and the people working alongside them. McKinsey's work on the agentic organisation calls this "humans above the loop". I think of them as "player-coaches", because these people still do real work; they're not pure overseers.

5 – Domain stewards. Named humans accountable for the integrity of a domain of the world model — customer, finance, risk, product, people. Not data custodians in the old sense. Stewards of meaning.

6 – A control plane. Identity, permissions, audit, evaluation, and governance for agents. This is the unsexy bit. It is also the bit that decides whether this works or blows up in public. Salesforce's Headless 360, Google's Agentspace, and Anthropic's MCP are all early signals of what this layer looks like — systems becoming agent-readable and tool-callable through governed interfaces.

7 – Decision rights, rewritten. The biggest change. If agents can execute, humans need clearer boundaries on what they own, what they approve, and what they delegate. Many organisations have never written these down in detail even for humans. The agentic era of AI will force the conversation.
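As a rough illustration of the first component, a work graph can start as typed nodes and labelled edges that both people and agents can query. This is a minimal sketch, not a product design: the `Node` and `WorkGraph` names, the relation labels, and the sample program data are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Node:
    id: str
    kind: str                    # e.g. "objective", "commitment", "risk", "decision"
    title: str
    owner: Optional[str] = None  # a named human, if anyone has taken it


@dataclass
class WorkGraph:
    nodes: dict = field(default_factory=dict)  # node id -> Node
    edges: list = field(default_factory=list)  # (source id, relation, target id)

    def add(self, node: Node) -> None:
        self.nodes[node.id] = node

    def link(self, src: str, relation: str, dst: str) -> None:
        if src not in self.nodes or dst not in self.nodes:
            raise KeyError(f"unknown node in edge {src} -> {dst}")
        self.edges.append((src, relation, dst))

    def unowned(self) -> list:
        """Nodes that nobody is accountable for."""
        return [n for n in self.nodes.values() if n.owner is None]

    def unmitigated_risks(self) -> list:
        """Risks with no incoming 'mitigates' edge."""
        mitigated = {dst for _, rel, dst in self.edges if rel == "mitigates"}
        return [n for n in self.nodes.values()
                if n.kind == "risk" and n.id not in mitigated]


g = WorkGraph()
g.add(Node("O1", "objective", "Migrate billing platform", owner="Program director"))
g.add(Node("R1", "risk", "Data migration slips past cutover"))
g.add(Node("R2", "risk", "Vendor contract not signed", owner="Procurement lead"))
g.add(Node("C1", "commitment", "Dry-run migration by end of month", owner="Stream lead"))
g.link("C1", "mitigates", "R1")
g.link("R2", "threatens", "O1")

print([n.id for n in g.unowned()])            # ['R1']
print([n.id for n in g.unmitigated_risks()])  # ['R2']
```

The two queries are the point: they answer questions a status pack tends to soften, such as which risk has no owner and which risk has nothing standing against it.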

So what for enterprise leaders: you cannot buy this as a product. You can buy pieces. The integration is the work, and the integration is mostly about decision rights, accountability, and meaning — not technology. Which is a polite way of saying the CIO cannot deliver this alone.

7/ Where this goes wrong

All of this is starting to emerge and there is a lot that could go wrong in the next few years.

Surveillance dressed up as clarity. The dangerous version of this is recording every meeting, indexing every Slack, and using the intelligence layer to monitor people. The useful version is organisational clarity — surfacing commitments, risks, and decisions so the company can act. Same technology. Very different operating model. Very different culture. Leaders need to pick, publicly, and be accountable for the pick.

Lazy delayering. Removing management layers without redesigning decision rights, coaching, escalation, trust, and governance does not create a faster company. It creates unmanaged work with better summaries. The summaries hide the mess for a quarter or two, and then the wheels come off. I'd bet on at least a couple of high-profile Australian examples of this by 2027.

Brittle automation. Agentic workflows fail in ways that classic automation does not. They fail plausibly — producing output that looks correct but isn't. Without evaluation, audit, and proper human checkpoints on consequential actions, this gets expensive. Fast.
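A human checkpoint on consequential actions does not need to be elaborate. The sketch below is one way it could look, under stated assumptions: `ProposedAction`, `execute_with_checkpoint`, and the in-memory `audit_log` are hypothetical names, and in a real system the `consequential` flag would be set by policy rather than by the agent proposing the action.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ProposedAction:
    description: str
    consequential: bool  # assumed to be set by policy, not by the agent


audit_log: list = []  # stand-in for a durable audit trail


def execute_with_checkpoint(
    action: ProposedAction,
    run: Callable[[], str],
    approve: Callable[[ProposedAction], bool],
) -> str:
    """Run an agent action, pausing for human approval when it is consequential."""
    if action.consequential and not approve(action):
        audit_log.append({"action": action.description, "status": "rejected"})
        return "rejected"
    result = run()
    audit_log.append(
        {"action": action.description, "status": "executed", "result": result}
    )
    return "executed"


# Example: a reviewer who declines everything, to show the gate holding.
decline_all = lambda action: False

print(execute_with_checkpoint(
    ProposedAction("Summarise this week's risk register", consequential=False),
    run=lambda: "summary drafted",
    approve=decline_all,
))  # executed
print(execute_with_checkpoint(
    ProposedAction("Email revised delivery date to the client", consequential=True),
    run=lambda: "email sent",
    approve=decline_all,
))  # rejected
```

Both outcomes are logged, including the rejection, so the audit trail shows what the agent tried to do as well as what it did.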

Political misuse. Whoever controls the intelligence layer controls the narrative about how the company is performing. That is a concentration of power we haven't really thought about. Boards in particular should be asking harder questions about who owns the company world model and what biases are baked into it.

Loss of coaching and craft. If junior people stop doing the knowledge-processing work entirely, where do they learn judgement? I don't have a clean answer here. I suspect part of the answer is deliberate — new graduate programs designed around working with agents from day one, explicit apprenticeship in judgement and taste, and senior people spending more time teaching, not less. The answer may lie in giving great juniors more agency, purpose, and space to drive real outcomes with these new tools.

Unclear accountability. When an agent takes an action and it goes wrong, who owns it? The human supervisor? The platform team? The steward? Legally and culturally, we don't have settled answers.

So what for enterprise leaders: build the governance as you build the scale. Unglamorous but non-negotiable.

8/ The executive question

The next enterprise operating system is not CRM plus ERP plus a few copilots. It is systems of record wrapped in an intelligence layer, with a work graph, world models, reusable capabilities, agent supervisors, domain stewards, and a control plane — and with humans pulled upward into outcome ownership, coaching, judgement, and human-to-human connection.

[Figure: Enterprise intelligence layer architecture]

That is not just a different architecture; it is a different theory of the firm.

The companies that get this right will move faster, see themselves more clearly, and free up their best people to do the work only humans can do. The companies that get it wrong will either surveil themselves into a morale crisis or delayer themselves into a quiet collapse eighteen months later.

This will not replace the human parts of management. Coaching a team through a hard quarter, holding someone accountable with care, making a risky call with imperfect information, building trust with a customer — none of that moves into the intelligence layer, and I don't think we want it to.

What moves is the middleware. The bit we've been asking humans to do because the systems couldn't.

We should let them stop.

Try this week

A few concrete things, if you want to start feeling the shape of this rather than just reading about it:

- Map your own knowledge processing. For one week, track how much of your time is gathering, reconciling, summarising, and routing information versus understanding, deciding, creating, coaching, and owning. Be honest.

- Pick one recurring status artefact (a weekly pack, a monthly report, a steerco input) and prototype its replacement with an agent over your source systems plus a meeting transcript. See what breaks. See what gets sharper. Claude Code or the Codex app, pointed at a small repo of source material, is a reasonable starting point.

- Write down the decision rights for one function you own. Who decides, who approves, who executes, who is informed. Most organisations have never written this down. The intelligence layer will force the conversation; better to start it on your own terms.

- Name a domain steward for one of your world-model domains — customer, risk, product, people. Give them a mandate to own the meaning, not just the data.

- Ask your leadership team one question: if we had an intelligence layer that did 60% of our knowledge processing, what would we do with the time? The answer might surprise you. There is value everywhere if we can move knowledge processing out of the workflow and into the intelligence layer.
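If you try the decision-rights exercise from the list above, it helps to write the result down as data rather than prose, because gaps become obvious. A minimal sketch, assuming the four roles named in that bullet; the decisions, role holders, and the `gaps` helper are illustrative only.

```python
ROLES = ("decides", "approves", "executes", "informed")

# Hypothetical entries; None means nobody has been named yet.
decision_rights = {
    "Change release date": {
        "decides": "Product lead",
        "approves": "Sponsor",
        "executes": "Delivery agent",
        "informed": "Steerco",
    },
    "Refund above threshold": {
        "decides": None,
        "approves": "Finance steward",
        "executes": "Support agent",
        "informed": None,
    },
}


def gaps(rights: dict) -> dict:
    """Return, per decision, the roles that have nobody named against them."""
    missing = {}
    for decision, assignment in rights.items():
        empty = [role for role in ROLES if not assignment.get(role)]
        if empty:
            missing[decision] = empty
    return missing


print(gaps(decision_rights))
# {'Refund above threshold': ['decides', 'informed']}
```

In this made-up example an agent can already execute the refund, but nobody is named as the decider. That is exactly the kind of gap worth finding before the agent does.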

I am increasingly convinced that the story of the next five years in enterprise technology is not just about AI replacing tasks. It is about AI replacing the middleware we have been asking humans to be, and forcing a rethink of how companies are organised.

The companies that handle that well will not become less human.

They will need more connected, more empowered teams, led by people who spend less time moving information around and more time making meaning, building trust, and owning outcomes.